Google Revamps Its Browser Agent Team Amid the OpenClaw Frenzy

Google is restructuring the team behind Project Mariner, its AI agent that navigates the Chrome browser and completes tasks on users' behalf, WIRED has learned. Several Google Labs employees who worked on the research prototype have recently moved to higher-priority projects, according to two sources familiar with the matter.

A Google spokesperson confirmed the staffing changes, saying the capabilities developed under Project Mariner will be folded into the company's broader agent strategy. The spokesperson added that some of these features have already been incorporated into other agent products, including the newly released Gemini Agent.

The shift comes as Google and other AI labs race to keep pace with the rise of advanced agents like OpenClaw. Although these tools are mostly used by developers today, Silicon Valley expects they could soon become general-purpose assistants for individuals and businesses. At Nvidia's recent developer conference, CEO Jensen Huang compared OpenClaw to a new operating system for agent-driven computers, declaring, "Every company in the world today needs to have an OpenClaw strategy."

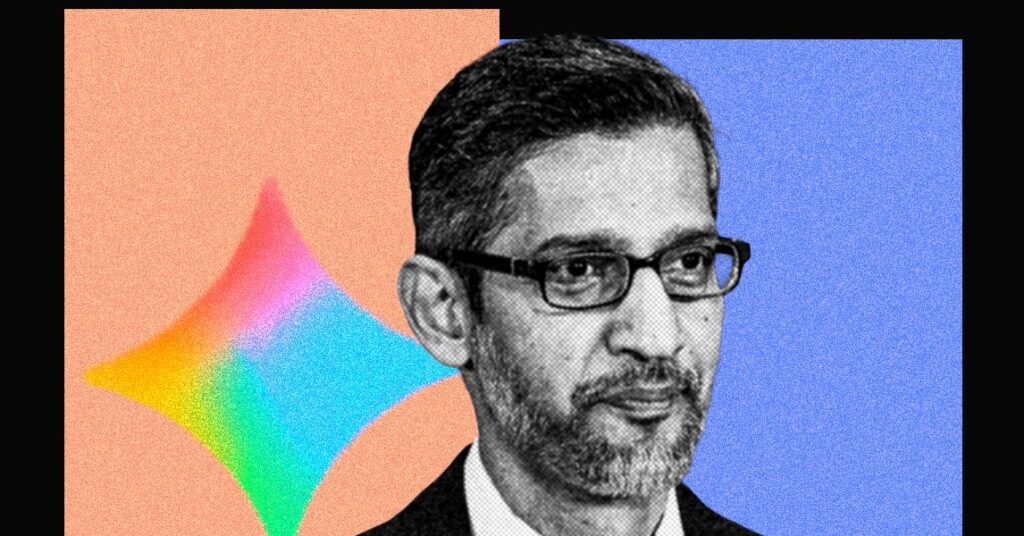

Google CEO Sundar Pichai showcased Project Mariner at last year's I/O conference. At the time, browser agents looked like the industry's next big bet, with OpenAI and Perplexity launching consumer-facing agents that promised to automate tasks on the web. These agents could click, scroll, and fill out forms much as a human would. Adoption, however, has fallen short of industry expectations.

Perplexity's Comet browser agent had just 2.8 million weekly active users as of December 2025, while OpenAI's ChatGPT Agent reportedly fell below 1 million weekly active users in recent months. Compared with the hundreds of millions of people who use ChatGPT each week, browser agent usage is almost negligible.

New Agents in the Field

Over the past year, the AI industry has shifted its attention toward agents like Claude Code and OpenClaw (whose creator has since been hired by OpenAI). Unlike browser-based agents, these systems control computers through the command line, which has proven to be a more reliable way to get tasks done. Some of these products include computer use as one feature among many agent capabilities. In that context, browser agents now look limited as standalone products.

Kian Katanforoosh, CEO of the AI-upskilling platform Workera and an AI lecturer at Stanford, says computer use agents have been slow to catch on partly because they are so computationally demanding. Most of these agents work by capturing a series of screenshots of a webpage, feeding them to an AI model, and then acting on what the model interprets. That process can be slow and sometimes unreliable.

“What Claude Code and OpenClaw demonstrated is that working with the terminal is considerably more efficient, as both the terminal and LLMs are text-based,” Katanforoosh noted. “It probably takes 10 to 100 times fewer steps to achieve the same results.”

That doesn't mean browser agents have stopped improving, or that research into computer use has hit a dead end.

The startup Standard Intelligence recently unveiled a computer use model trained on video rather than screenshots. The company says it built a video encoder that compresses video to fit within an AI model's context window, and claims a 50x efficiency improvement over earlier computer use models. To demonstrate the model's capabilities, the startup connected it to a car, a live video feed, and a computer keyboard, and showed it briefly driving itself around San Francisco.